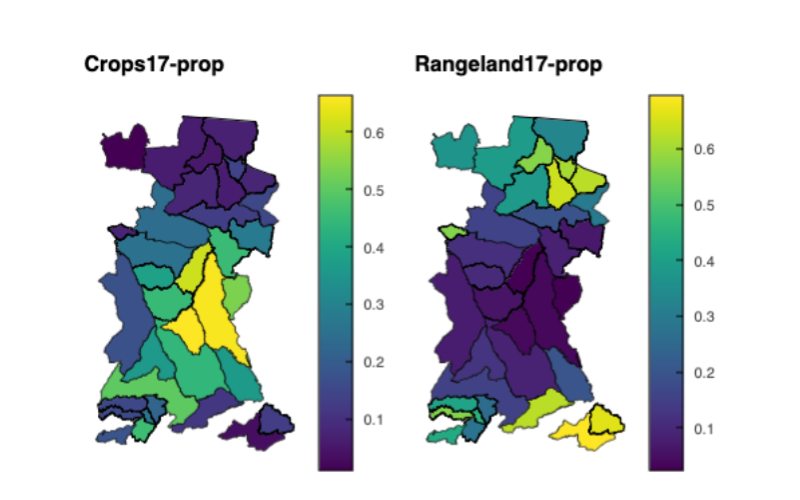

Exploring Land Cover Data (Impact Observatory)

How to run¶

Running on Binder¶

The notebook is designed to be launched from Binder.

Click the Launch Binder button at the top level of the repository

Running locally¶

You may also download the notebook from GitHub to run it locally:

Open your terminal

Check your conda install with

conda --version. If you don’t have conda, install it by following these instructions (see here)Clone the repository

git clone https://github.com/eds-book-gallery/b128b282-dee7-44a7-bc21-f1fd21452a83.gitMove into the cloned repository

cd b128b282-dee7-44a7-bc21-f1fd21452a83Create and activate your environment from the

.binder/environment.ymlfileconda env create -f .binder/environment.yml conda activate b128b282-dee7-44a7-bc21-f1fd21452a83Launch the jupyter interface of your preference, notebook,

jupyter notebookor labjupyter lab

- Millington, J. (2025). Exploring Land Cover Data (Impact Observatory) (Jupyter Notebook) published in the Environmental Data Science book. Zenodo. 10.5281/ZENODO.8311800